
The average research team manages five to seven tools just to go from "we need to talk to customers" to "here's what we learned." One for recruiting. One for scheduling. One for recording. One for transcription. One for analysis. Maybe another for the repository. And they're all made by different companies that don't talk to each other.

AI tools for UX research are platforms that use AI to automate recruiting, transcription, analysis, interviewing, and insight synthesis across the research lifecycle. The ones worth paying attention to collapse the number of tools you need, from five or six down to one or two.

This guide covers eight platforms, organized by what they actually solve.

Every AI research tool promises speed. Few deliver on the workflow problem. We focused on five criteria:

One note on transparency: Great Question is on this list. We'll be direct about where it fits and where other tools are a better pick for specific use cases.

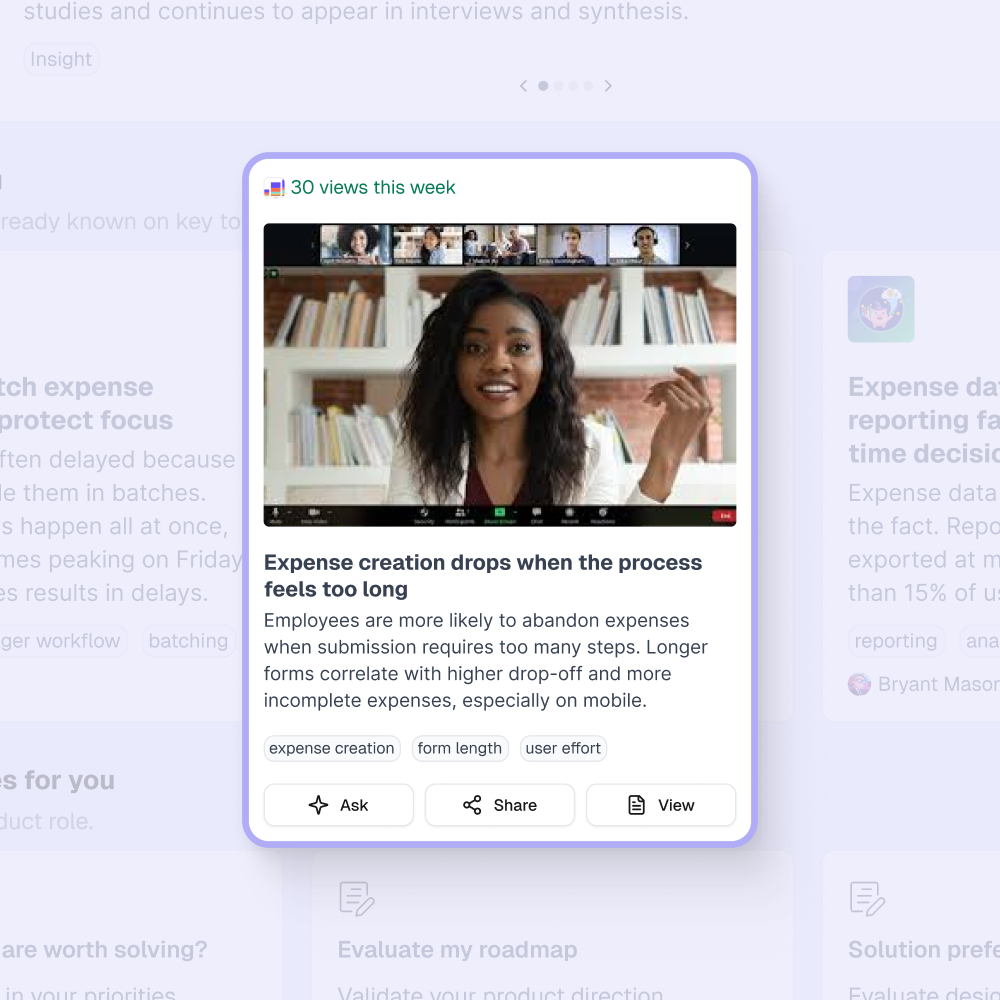

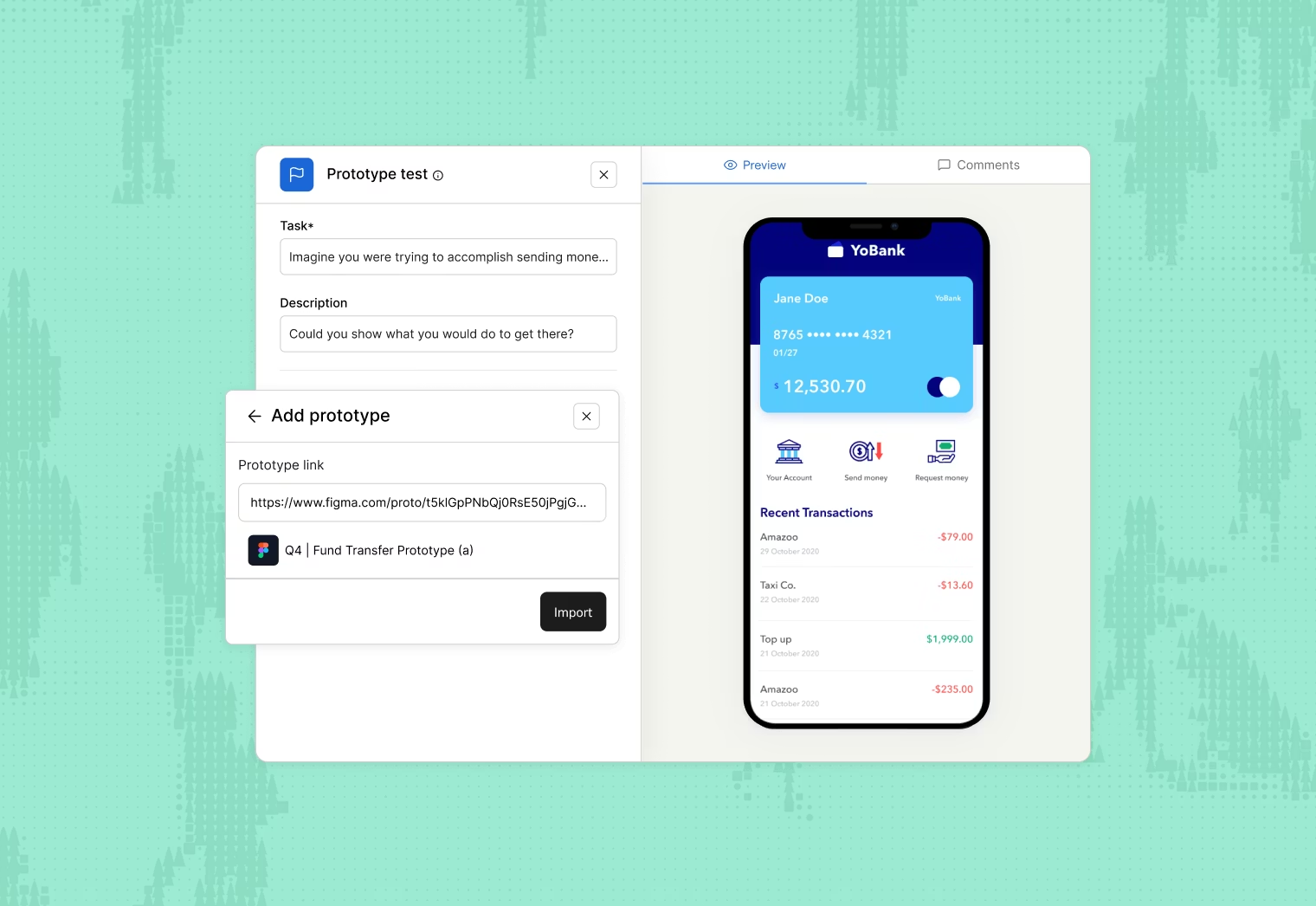

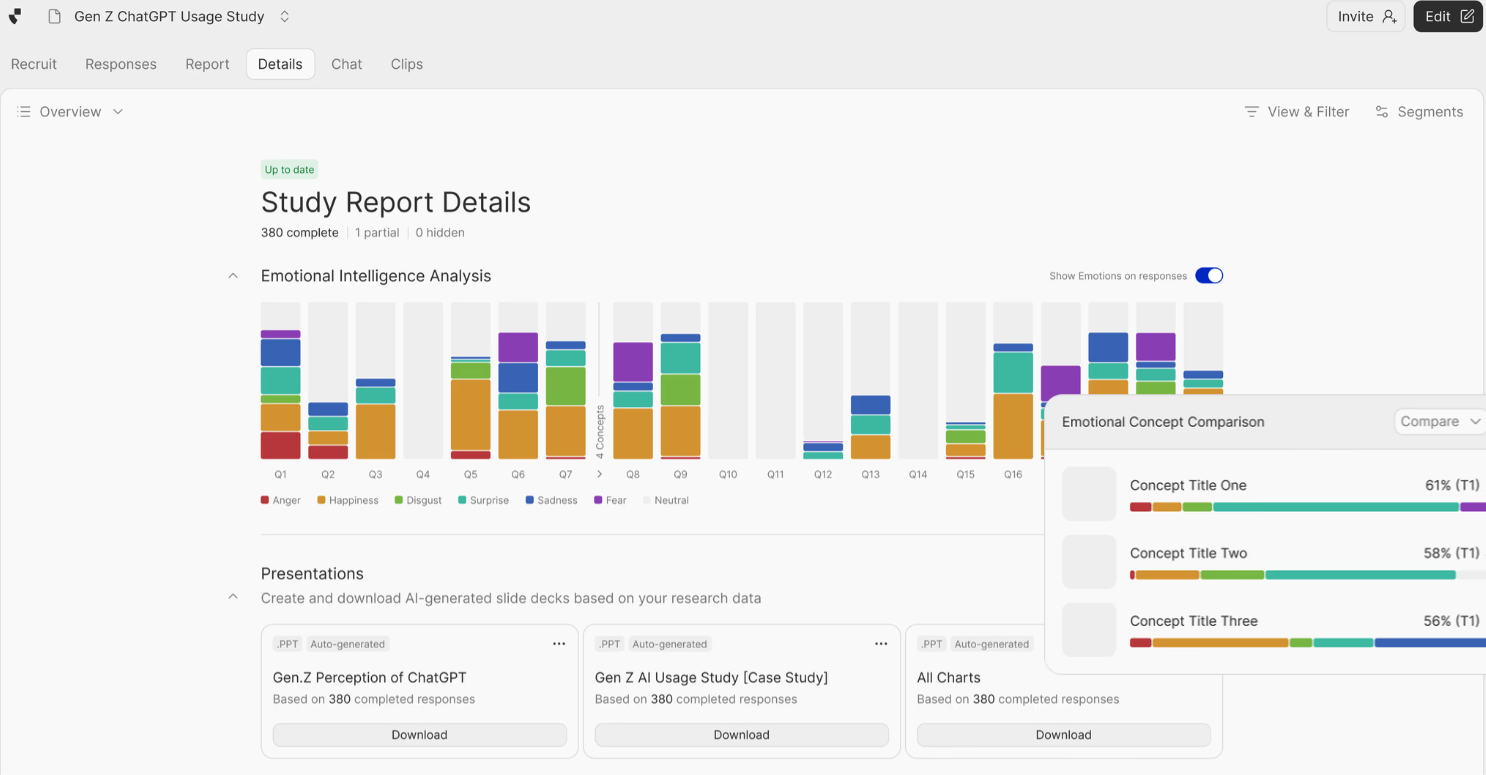

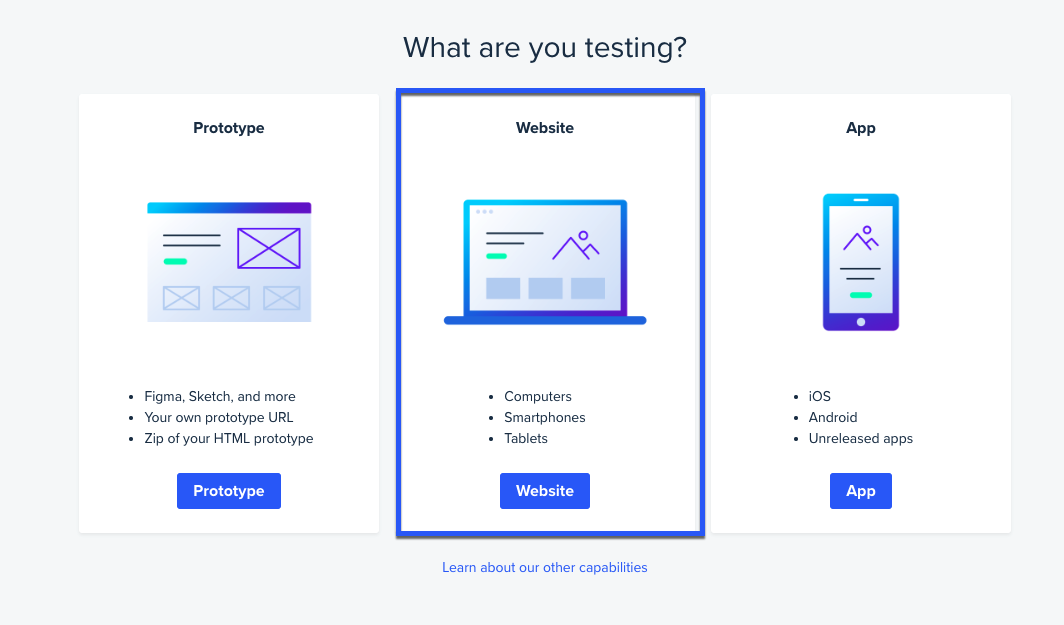

Most research tools help you run studies. Great Question does that too, but the real shift is what happens to all that research afterward. Every interview, survey response, prototype test, and card sort feeds into an AI-powered research repository that turns your accumulated research into a searchable knowledge base you can query in plain language.

Ask questions of all your past research. Instead of digging through folders of transcripts and slide decks, ask "What do enterprise users think about our onboarding flow?" and get answers with citations from specific interview moments across your full research history. Study #50 benefits from everything you learned in studies #1 through #49. No other tool on this list does this.

AI that finds what humans miss. The AI analysis identifies themes across interviews, surfaces contradictions between participants, and detects moments where users hesitate or backtrack. Asana's research cycles compressed from two weeks to two or three days.

Recruit from your own customers. Great Question connects directly to your CRM (Salesforce, HubSpot) and identifies participants from people who already use your product. Brex went from single-digit researchers to 100+ people running research company-wide because the platform made study design accessible to PMs and designers.

Every method, one participant pool. Unmoderated prototype testing, surveys, moderated interviews, AI-moderated interviews, card sorts, tree tests. One study's participants can be re-recruited for the next. One project's insights feed into the next.

The consolidation payoff. ServiceNow's research team went from 15 tools to 7. Recruiting dropped from 118 days to 6. That kind of reduction only happens when recruiting, methods, analysis, and your research knowledge base live in one place.
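To put the ServiceNow figures above on a common scale, here is a minimal sketch that converts each before/after pair into a percentage reduction. The helper name `percent_reduction` is our own, chosen for illustration; only the four input numbers come from the example above.

```python
def percent_reduction(before: float, after: float) -> float:
    """Return the percentage drop going from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


# Figures quoted in the ServiceNow example: tool count and recruiting days.
tools_cut = percent_reduction(15, 7)
recruiting_cut = percent_reduction(118, 6)

print(f"Tools: {tools_cut:.1f}% fewer")
print(f"Recruiting time: {recruiting_cut:.1f}% shorter")
```

On these numbers, the tool count falls by roughly half and recruiting time by about 95%.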

When to skip Great Question: You're exclusively running large-scale quantitative surveys with thousands of responses and need deep statistical modeling (Qualtrics territory). Or you're doing purely casual, ad hoc research where a platform investment doesn't make sense yet.

Dovetail is a research repository with AI analysis layered on top. Teams often evaluate it expecting a full research platform and discover it doesn't recruit participants, doesn't run studies, and doesn't manage your participant database.

Where it earns its spot: Cross-project synthesis. It ingests transcripts, videos, survey responses, even Slack messages and PDFs, then applies AI tagging with hierarchical coding. The AI distinguishes pricing objections from feature requests from usability confusion. For teams running 20+ studies a year, that first-pass tagging saves weeks of manual transcript review.

The gap: Dovetail treats recruitment, scheduling, incentives, and study management as someone else's problem. You're bringing data to Dovetail, not originating it there. (Full breakdown: Dovetail alternatives.)

Best for: Teams that can dedicate a full-time researcher to it, since managing Dovetail is time-consuming. Skip if: Tool overhead is your main problem.

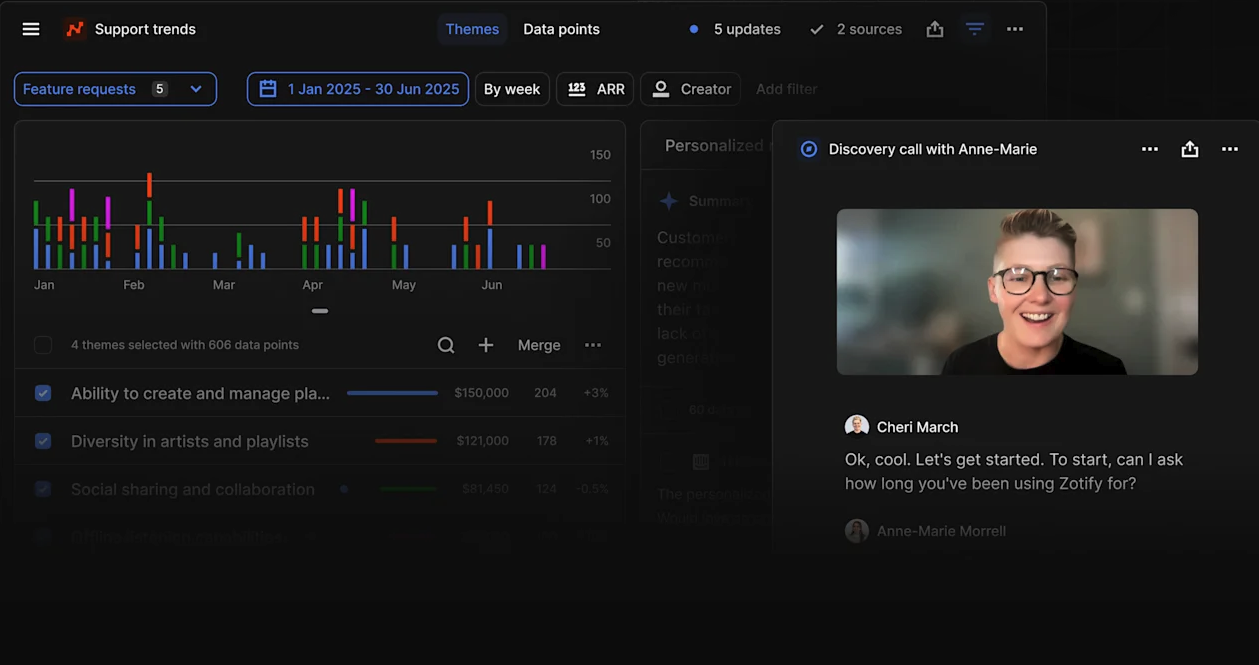

Maze started as a prototype testing tool but has grown into a broader research platform. It now covers surveys, card sorts, tree tests, prototype testing, and AI-moderated interviews. The AI moderator plans, runs, and analyzes interviews autonomously, asking follow-up questions, adapting across time zones, and grouping insights into themes.

Where it earns its spot: The combination of quantitative methods (prototype tests, surveys, card sorts, tree tests) and AI-moderated qualitative interviews in one platform. Access to 6 million+ participants means you can launch studies without a separate recruiting tool. AI-powered thematic analysis, sentiment detection, and automated reports speed up the analysis phase.

The gap: Maze doesn't offer a participant CRM for recruiting your own customers. You're using their panel or importing your own list, not building a compounding research relationship with your customer base. There's also no cross-study research repository where past research becomes queryable knowledge.

Best for: Product and design teams who want multiple methods plus AI interviews in one tool. Skip if: You need to recruit from your own customer base or want an AI research repository that compounds across studies.

Listen Labs replaces traditional moderated interviews with an AI interviewer that conducts, transcribes, and synthesizes conversations autonomously. Note that Great Question also offers robust AI-moderated interview functionality.

You define your research goals, and the AI handles recruiting from a 30 million+ participant pool, runs in-depth interviews in 50+ languages, then delivers executive-ready reports with themes, highlight reels, and slide decks.

Where it earns its spot: Speed and scale for qualitative research. Instead of scheduling 10 interviews across two weeks, Listen runs them in parallel and delivers synthesized findings in hours. The AI asks follow-up questions, adapts to responses, and surfaces patterns across conversations.

The gap: Listen Labs is laser-focused on AI-moderated interviews. No surveys, no card sorts, no research repository for querying past studies. If your research program includes multiple methods, you'll need other tools alongside it. And because the AI conducts the interview, you lose the human interviewer's ability to pick up on body language and pursue unexpected tangents with genuine intuition.

Best for: Teams that run high-volume interview programs and want to scale qualitative research without scaling headcount.

Skip if: You need multiple research methods or want a repository where insights compound over time.

UserTesting has a large participant panel of 2+ million people, but it comes at a high price.

Where it earns its spot: Panel diversity across demographics, income levels, and device types. AI-generated video highlights.

The gap: It's very pricey and has lock-in contracts. You're always recruiting strangers through UserTesting's pool, and every study starts from zero. (See: UserTesting alternatives.)

Best for: Fast access to external participants.

Skip if: You want to build compounding relationships with your own customer base.

Calling Hotjar a "research tool" is generous. It's behavior analytics with qualitative features bolted on: session replays, heatmaps, click patterns, and feedback surveys. The AI synthesizes themes across survey responses, and for continuous feedback programs with 100+ responses, that works reasonably well.

The gap: No interviews. No participant recruiting. No study management. Session replay tells you what happened. Feedback surveys tell you what people typed. Neither gives you the depth of a 45-minute moderated interview where you follow unexpected threads in real time.

Best for: Product teams wanting behavior data and feedback in one dashboard. Skip if: You need any actual research method beyond feedback surveys.

Lookback builds everything around the video interview. Scheduling, recording, transcription, AI-generated highlights and timestamps. For distributed teams where the interview recording is the primary artifact, it handles those logistics cleanly.

The gap: Everything after the recording. Analysis is thin. The Lookback-plus-Dovetail pairing is common, but it's two subscriptions for what should be a single workflow.

Best for: Teams doing primarily moderated research who already have analysis solved elsewhere.

Skip if: You want recording and analysis in the same tool.

Notably is the simplest analysis tool on this list. Import transcripts, tag highlights, generate themes. The AI assists with tagging suggestions without overriding human judgment. For teams of 3-10 researchers who want synthesis without Dovetail's complexity, the simplicity is the selling point.

The gap: No recruitment, no participant management, no research methods. It's a tool you'll outgrow once your team expands past 10 or your program matures beyond basic synthesis.

Best for: Small research teams (3-10) who want simple tagging. Skip if: You have 50+ research artifacts per quarter or want to avoid switching platforms in 12 months.

Tool administration is eating your time: You need a platform, not another point solution. Great Question covers recruiting, methods (including prototype testing), analysis, and repository in one place.

Your past research is trapped in silos: Great Question's AI repository lets you query across every study you've ever run. That's the difference between starting fresh each time and building on what you already know.

You need fast design validation and multi-method testing: Maze covers prototype tests, surveys, card sorts, tree tests, and AI interviews. Great Question also offers robust prototype testing, and it is the better fit if you also want a participant CRM and a cross-study repository.

You want to scale qualitative interviews without scaling headcount: Great Question offers AI-moderated interviews in multiple languages.

You need fast access to external participants: User Interviews, which is integrated with Great Question.

You're doing product analytics, not research: Hotjar.

You're just getting started: Start with a platform that compounds. Teams that start with point solutions spend 12-18 months assembling a stack they'll eventually consolidate anyway.

AI-moderated interviews are going mainstream. Tools that run AI-facilitated sessions where the AI follows a discussion guide, probes on interesting responses, and adapts in real time are moving from experiment to production. Listen Labs raised $69M on this thesis alone, Maze shipped an AI moderator, and Great Question's AI-moderated interviews let you run 50 sessions in parallel instead of scheduling ten across two weeks.

"Repository" is becoming a feature, not a category. Standalone repositories made sense when no platform offered built-in search and synthesis. Now that full-lifecycle tools include repositories, the standalone case gets harder to justify.

The 80% stat keeps climbing. According to User Interviews' State of UX Research report, 80% of researchers now use AI in some form, up 24% year over year.
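The phrase "up 24%" can be read two ways, and the summary above does not say which is meant. As a rough sketch, the snippet below works out what last year's share would have been under each reading. Both readings are assumptions on our part, and the variable names are illustrative only.

```python
current_share = 80.0  # percent of researchers using AI, as quoted above
reported_rise = 24.0  # the "up 24%" figure, meaning unspecified

# Reading 1 (assumption): a relative increase, so last_year * 1.24 = 80.
prior_if_relative = current_share / (1 + reported_rise / 100)

# Reading 2 (assumption): an increase of 24 percentage points.
prior_if_points = current_share - reported_rise

print(f"Relative reading: last year was about {prior_if_relative:.1f}%")
print(f"Percentage-point reading: last year was {prior_if_points:.1f}%")
```

The two readings imply a prior-year share of roughly 65% or 56%, so it is worth checking the original report before quoting the growth figure.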

Participant CRM is the overlooked differentiator. Most tools still treat recruiting as a panel problem. The teams seeing the biggest efficiency gains recruit from their own customer base through integrated CRMs.

You can. But if you're maintaining more than three research tools, you're spending more time on logistics than research. At that point, consolidation has clear ROI.

AI excels at consistency and first-pass speed: tagging, highlighting, theme suggestion. Humans are better at interpreting nuance and connecting findings to business context. Use AI as your first pass, then do synthesis and interpretation on top of AI-surfaced evidence. Don't skip the human layer.

Great Question integrates with customer data sources (Salesforce, Snowflake) and Slack. It also integrates with Figma and offers an MCP (Model Context Protocol) integration for bringing research into Claude.

All major tools encrypt data in transit and at rest and claim GDPR/CCPA compliance. But most point solutions have no enterprise governance story, which matters if you need SSO, audit logs, data residency controls, and advanced permissions.

Only if you're seeing specific bottlenecks: recruiting latency, analysis speed, or the compounding time cost of switching between platforms. But "works" and "we're used to it" aren't the same thing. Teams that consolidate often don't realize how much overhead they were absorbing until it's gone.

Tania Clarke is a B2B SaaS product marketer focused on using customer research and market insight to shape positioning, messaging, and go-to-market strategy.